By Stu Sjouwerman, CEO, KnowBe4

As the online threats to organizations have grown over the past 10-15 years, security awareness training (SAT) has become a critical component of the security infrastructure deployed by IT departments to protect their networks from attacks by malicious actors, whether those attacks are driven by increasingly sophisticated phishing campaigns, voice-driven vishing schemes, or more traditional web-based scams.

The job of training unschooled, lay users to recognize when they are being targeted by malicious actors and how to respond properly is not as simple as it once was, though. In the early days of phishing, most advice centered around instructing users to look for errors in grammar, syntax, and spelling when dealing with emails requesting they click links and log in to spoofed online bank sites to address purported problems with their accounts. Then as now, some security-minded training even directed users to hover their mouse pointers over links to verify that the true destinations of those links matched the visible URL text.

Such advice is not nearly as useful as it once was, however. The bad guys now routinely use slickly designed email graphics to spoof well-recognized, trusted online brands coupled with text bodies lifted from legitimate emails, some of them harvested directly from the inboxes of ordinary corporate employees engaged in routine communication with colleagues, customers, clients, and partners. Moreover, with the rise of mobile devices, ordinary users are much less likely to be savvy enough to decipher increasingly complex URLs.

In response, security awareness training programs have developed more comprehensive and useful tips designed to enable ordinary users to deal with the widening array of social engineering schemes regularly deployed by malicious actors in phishing campaigns. Our own new-school security awareness training introduces users to 22 "red flags" that should raise alarms when they encounter them in potentially malicious emails.

As useful as these tips are, they often rely on users to exercise a properly skeptical degree of judgement. Many users will rise to the task. Others, however, may struggle. Consider, for example, advice to be on the lookout for email elements that are "very unusual or out of character." What strikes some users as "unusual" or "odd" may seem completely ordinary and unremarkable to others, especially if their job positions involve dealing with a firehose of email traffic from people outside the company.

Thankfully (or perhaps ironically), the bad guys can be of help. Although the malicious actors have noticeably improved the "believability" or design of their bread-and-butter phishing campaigns, they still struggle with quality control -- a veritable Achilles' heel in their operation, given that phishing is still largely a volume business.

The bad guys screw up a lot more often than you might think. And regular employees can be trained to look for these errors, most of which are surprisingly simple to spot -- provided users know to look for them. And not only are they easy to spot, they are fairly unambiguous, requiring little judgement on the part of users to decipher.

In what follows we look at five common errors or "tells" that we see every day in phishing emails forwarded to us by customers using the Phish Alert Button (PAB).

- Brand Mis-Match

One of the more effective techniques used by the bad guys to trick unwitting users into clicking malicious links, opening malicious attachments, or coughing up login credentials to corporate accounts is to spoof familiar online brand names. In doing so, malicious actors leverage the trust users have invested in those brands, which can range from large online platforms like Google and Microsoft to more narrowly designed services like Docusign or Bank of America.

While many phishing emails might spoof legitimate emails from iconic online brands in their entirety -- the fake Google security alert that tricked 2016 Clinton campaign chairman John Podesta being one example -- plenty of other malicious emails take creative license with the names and logos of brands familiar to consumers. And in so doing, the bad guys often become sloppy.

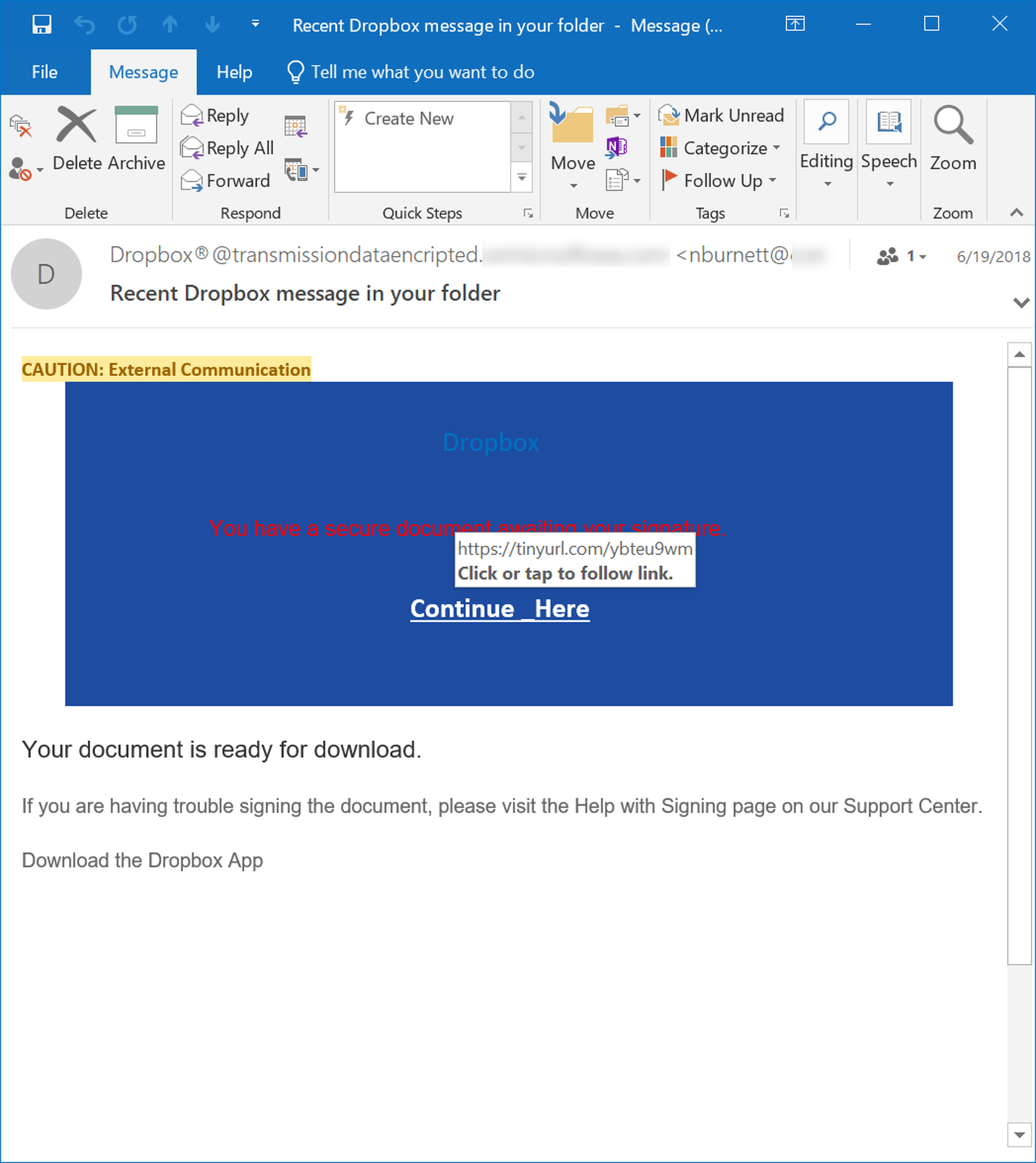

Take, for example, this phishing email, which clearly borrows graphical design elements familiar to anyone who has received an email from Docusign.

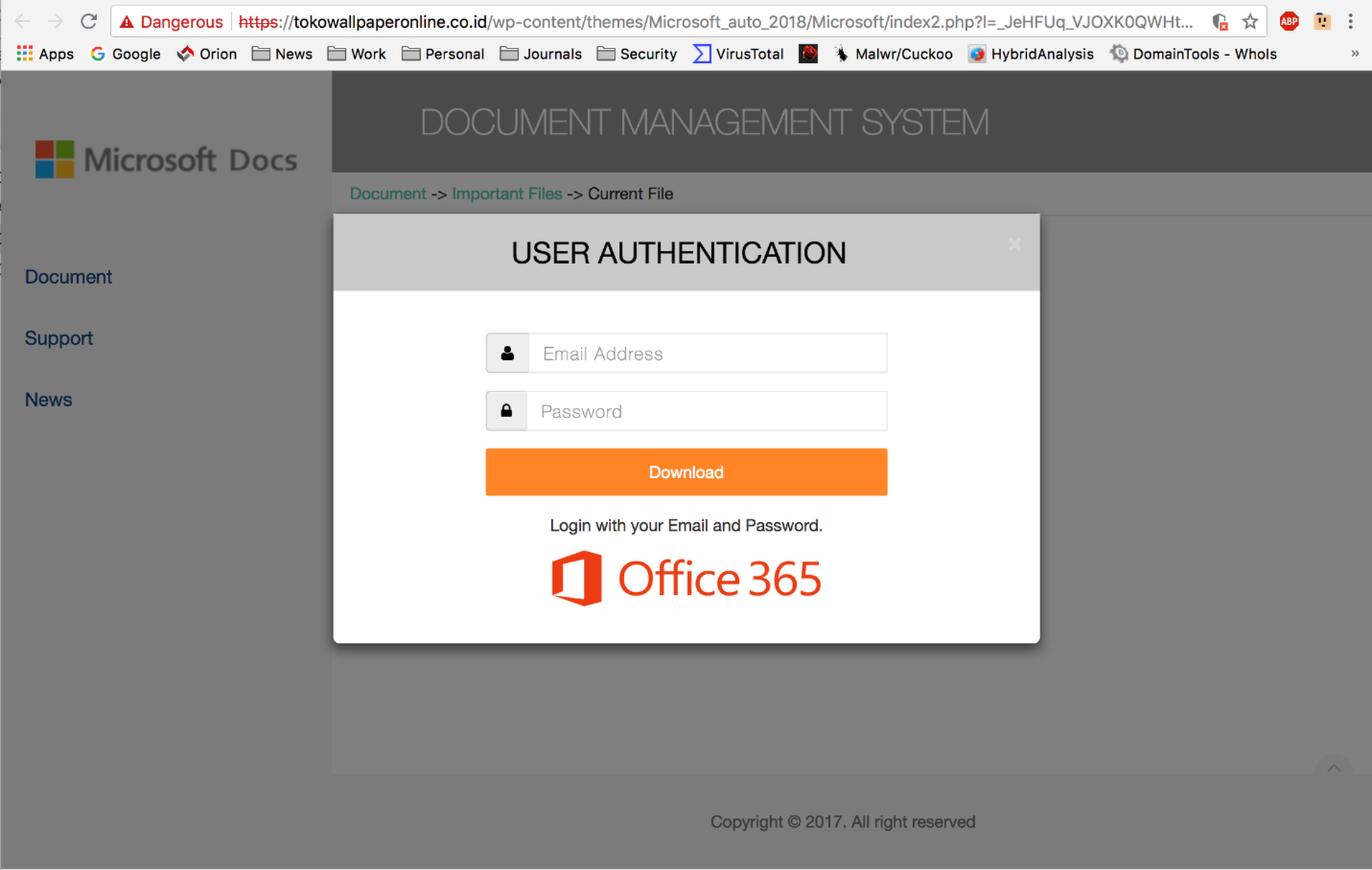

Although clearly intended to spoof a standard Docusign email, this phish gives away the game by labeling the main blue link box "Dropbox." To compound the problem, unwitting users who click the link will be greeted by an external web page that identifies itself as part of the "Microsoft Document Management System."

This unexpected mashup of brand names should alert any user who is paying attention that something is amiss.

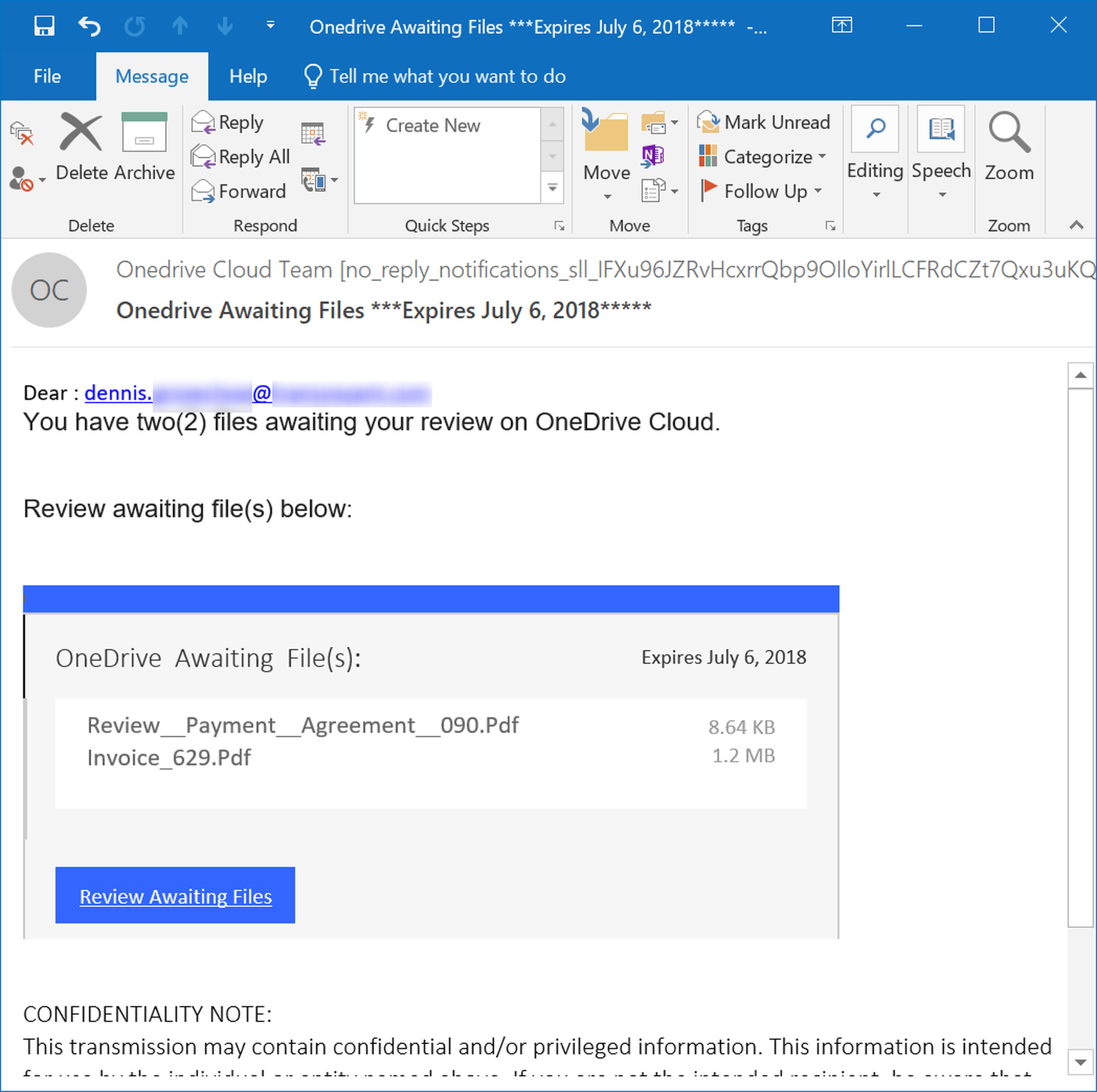

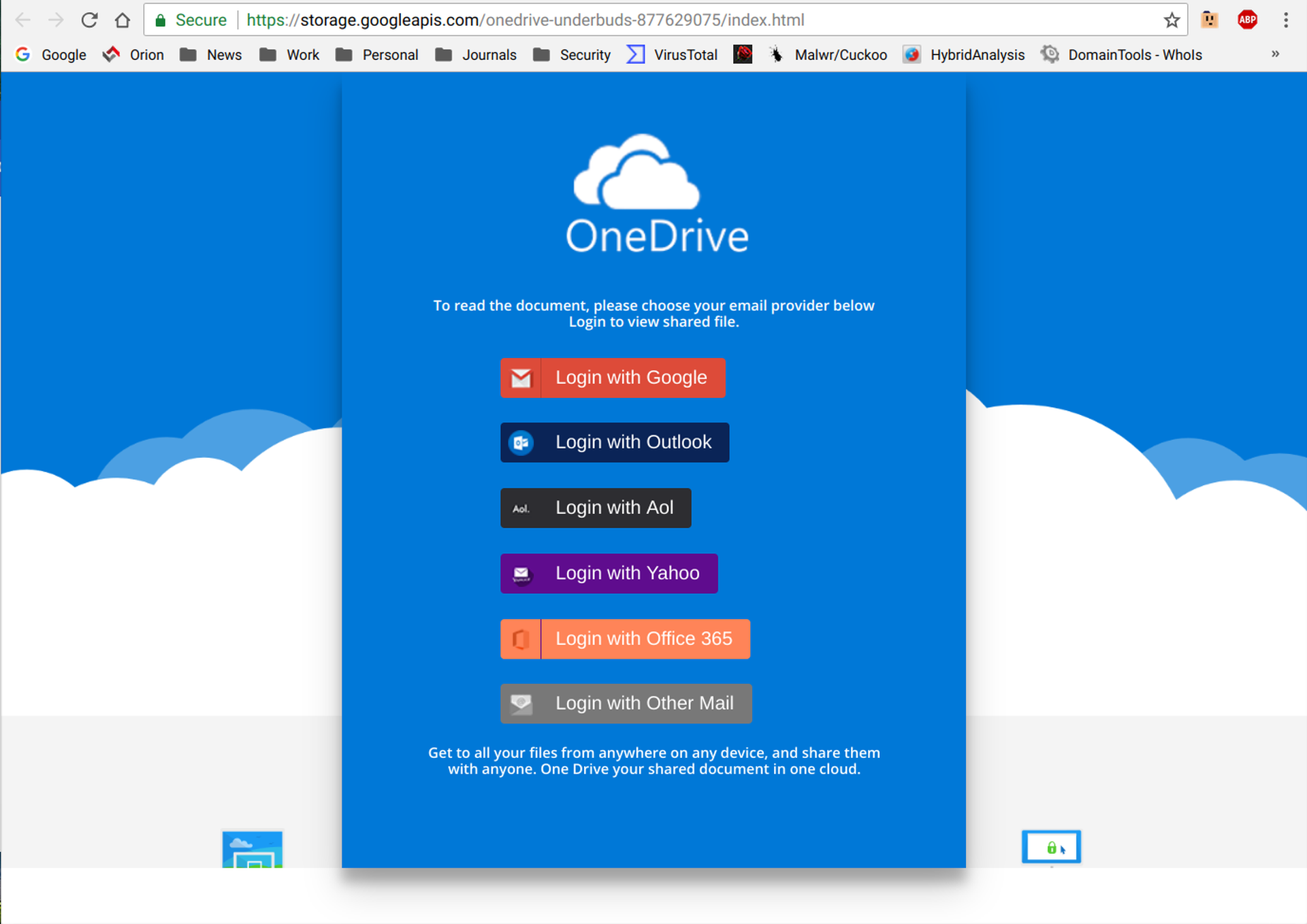

Sometimes the mismatch of brands and/or services requires a bit more attentiveness on the part of users. This phishing email claims to be delivering a file from OneDrive.

And while the web page opened by the link is prominently branded as OneDrive, eagle-eyed users should notice that the web page is hosted at Google Cloud Storage, a competing file storage service, not OneDrive.

The bad guys tend to treat brand names as trust marks, deploying them in a salt-and-pepper fashion with an eye towards gaining the confidence of gullible, inattentive users. In doing so, however, they all too often neglect to ensure that users navigating phishing schemes receive an internally consistent brand experience.
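For readers who want to see the same check expressed in code, here is a minimal sketch of a brand-versus-host comparison. It assumes you already know which brand an email or page claims to represent; the `BRAND_DOMAINS` table, the function names, and the sample URLs are illustrative assumptions, not a vetted list or part of any product.

```python
from urllib.parse import urlparse

# Hypothetical, non-exhaustive map of brand names to domains they are
# assumed to use. A real list would need ongoing maintenance.
BRAND_DOMAINS = {
    "onedrive": ("onedrive.live.com", "1drv.ms", "sharepoint.com"),
    "docusign": ("docusign.com", "docusign.net"),
    "dropbox": ("dropbox.com",),
}


def host_matches(host: str, domain: str) -> bool:
    """True if host equals domain or is a subdomain of it."""
    host = host.lower().rstrip(".")
    return host == domain or host.endswith("." + domain)


def brand_mismatch(claimed_brand: str, url: str) -> bool:
    """Return True when the URL's host is not listed for the claimed brand."""
    domains = BRAND_DOMAINS.get(claimed_brand.lower())
    if domains is None:
        return False  # unknown brand: no basis for a verdict
    host = urlparse(url).hostname or ""
    return not any(host_matches(host, d) for d in domains)
```

Under these assumptions, a page branded as OneDrive but served from `storage.googleapis.com` would be flagged, while the same branding on `onedrive.live.com` would not. The check is only as good as the domain table behind it, which is why a trained pair of eyes remains useful.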

- Fake Attachments

Another error that users can be trained to spot arises not so much from sloppiness on the part of malicious actors as from their efforts to frustrate the ability of locally installed anti-virus scanners to detect malicious files. To do this, the bad guys construct emails that appear to contain file attachments but in reality deliver malicious links to files hosted on third-party servers.

These "fake attachment" emails will often instruct users to open attached files...

Users who hover their mouse pointers over the purported attachments should notice that they are actually links to external sites.

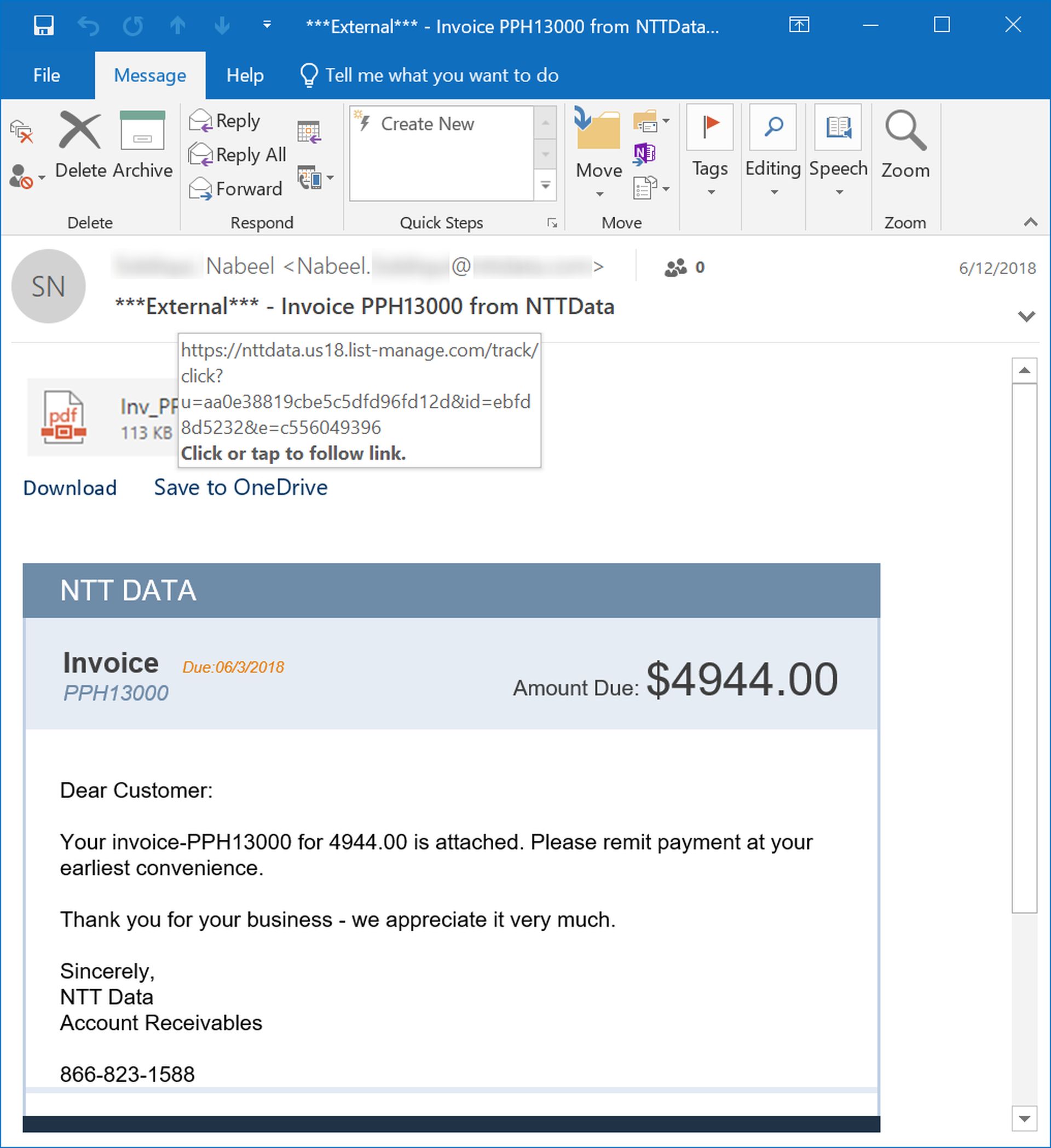

In this email, a fake attachment is coupled with OneDrive branding...

Of course, the malicious link takes users to an external site that is most certainly not OneDrive, much less an attachment from OneDrive.
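The hover test described above can be approximated in code. The sketch below assumes you have the email's HTML body and a list of the file names that are genuinely attached; it reports links whose visible text looks like a file name even though no such file is attached. The class name, function name, and extension list are hypothetical choices made for illustration.

```python
import re
from html.parser import HTMLParser

# Link text that "looks like" a document name (an illustrative extension list).
FILE_NAME = re.compile(r"\b[\w\- ]+\.(pdf|docx?|xlsx?|pptx?|zip)\b", re.IGNORECASE)


class LinkCollector(HTMLParser):
    """Collect (href, visible text) pairs for every <a> element with an href."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def fake_attachment_links(html_body, real_attachment_names):
    """Return hrefs whose link text names a file that is not actually attached."""
    parser = LinkCollector()
    parser.feed(html_body)
    parser.close()
    attached = {name.lower() for name in real_attachment_names}
    return [
        href
        for href, text in parser.links
        if FILE_NAME.search(text) and text.lower() not in attached
    ]
```

A message whose body shows "Invoice_2291.pdf" as a clickable element, but which carries no attachment by that name, would be returned by this function. That is the same discrepancy a user sees when the hover tooltip reveals an external URL.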

- Fake Text Blocks

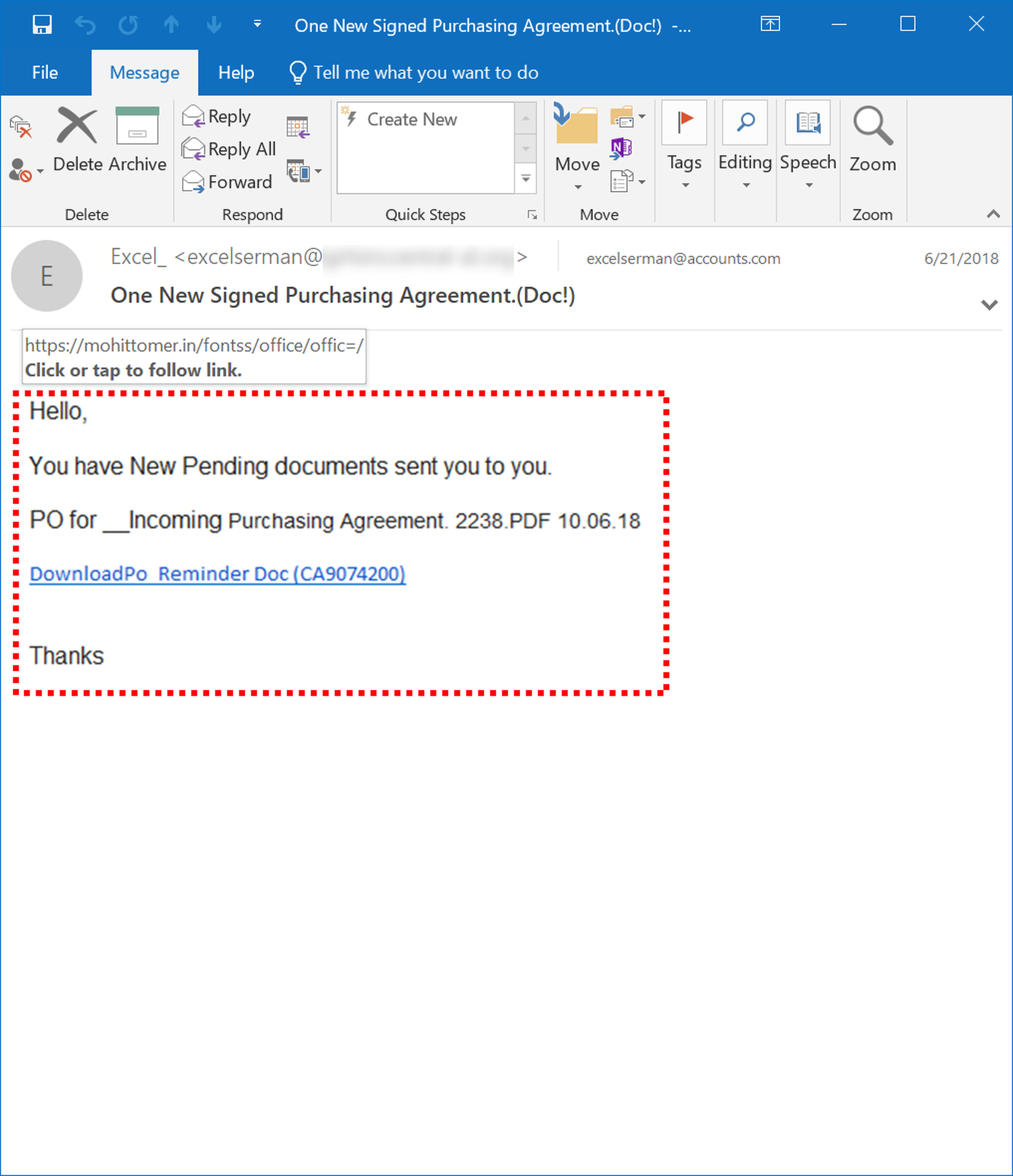

Another favorite technique of the bad guys is the use of fake text blocks -- disguised through cleverly constructed images -- designed to frustrate email security services that scan email bodies for key words and phrases that could indicate the email is a phish. For the purposes of this discussion, we have outlined the border of the image used in place of a standard email body text block.

Again, users who hover their mouse pointers over various parts of the email should notice that the underlying link is revealed not just over what appears to be the link text itself but over any part of the main email body.
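The same observation, that one link covers the whole body because the body is really a picture, can be sketched as a simple heuristic. The code below counts visible text characters and images nested inside links; the class name, function name, and the 40-character threshold are assumptions chosen for illustration, not tuned values.

```python
from html.parser import HTMLParser


class BodyStats(HTMLParser):
    """Count visible text characters and images nested inside links."""

    def __init__(self):
        super().__init__()
        self.text_chars = 0
        self.linked_images = 0
        self._link_depth = 0
        self._skip_depth = 0  # greater than zero while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._link_depth += 1
        elif tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "img" and self._link_depth > 0:
            self.linked_images += 1

    def handle_endtag(self, tag):
        if tag == "a" and self._link_depth > 0:
            self._link_depth -= 1
        elif tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.text_chars += len(data.strip())


def looks_like_image_body(html_body, max_text_chars=40):
    """True if the body has a linked image and almost no real text."""
    stats = BodyStats()
    stats.feed(html_body)
    stats.close()
    return stats.linked_images > 0 and stats.text_chars <= max_text_chars
```

Plenty of legitimate marketing emails are also image-heavy, so a result of `True` is a reason to look more closely rather than a verdict.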

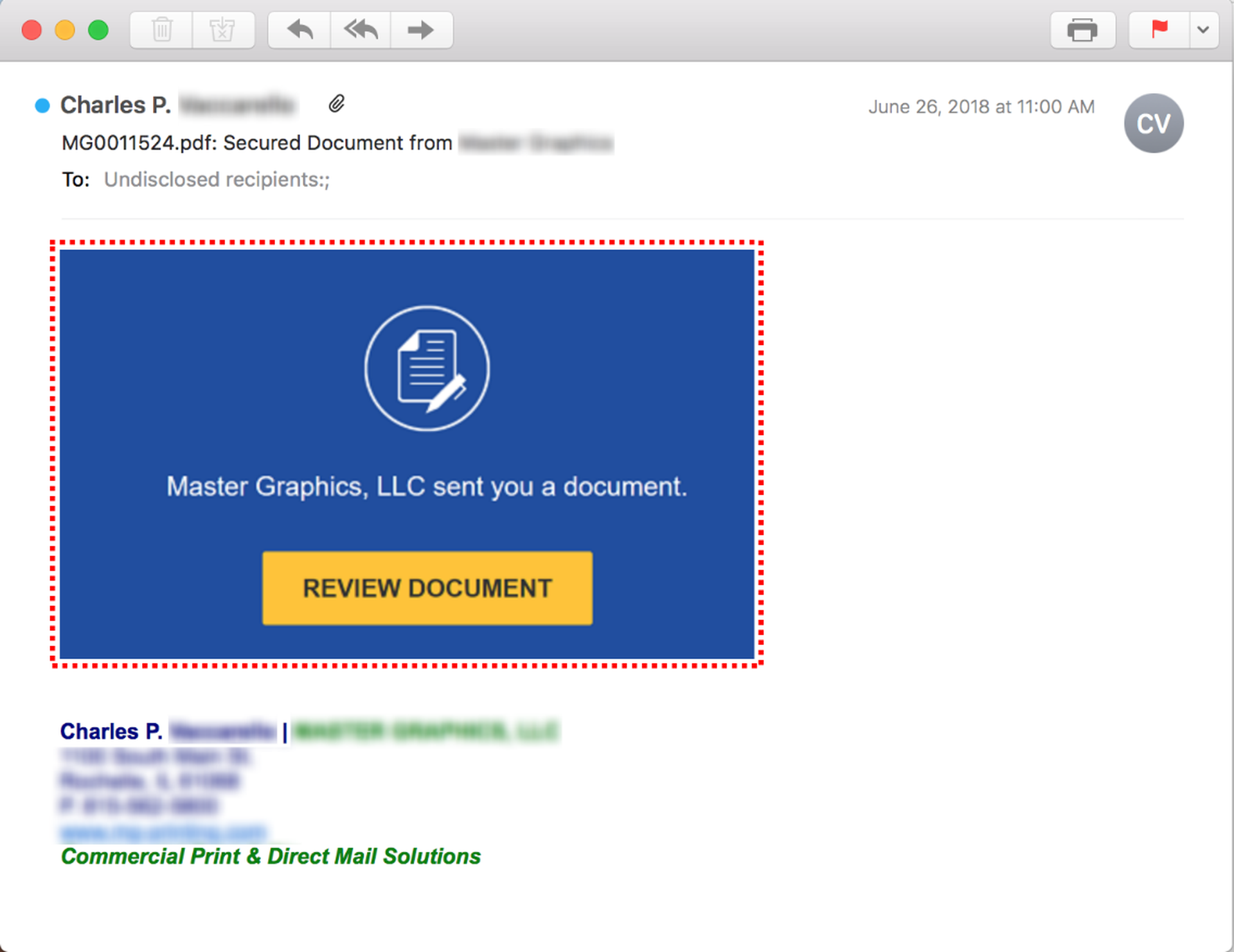

In some cases the bad guys will use an image that incorporates HTML-based graphical design elements in addition to text:

The beauty of these first three "tells" is that they do not require users to be especially savvy in deciphering URLs. If they can spot an illogical mashup of brand names or recognize when they are looking at a URL in a location that makes no sense, they can use these common errors to flag phishing emails.

The final two "tells," however, do require users to be minimally proficient at making sense of URLs. Thus, some users may need a basic introduction to URLs to make use of these phish detection techniques.

- Wordpress & Blogspot Links

Wordpress and Blogspot sites have long been favorites of malicious actors intent on delivering malicious content to unsuspecting users. These sites are often poorly maintained, lending themselves to hacking and compromise by the bad guys. Furthermore, their predictable internal file structure means that malicious actors can craft bundles of malicious content that are easily dropped in or inserted into existing, published websites.

While Wordpress and Blogspot web pages are perfectly serviceable options for bad actors looking to spring malicious payloads on lazy, inattentive web surfers, these sites -- and the links they generate -- are more problematic when deployed as part of email-driven phishing schemes.

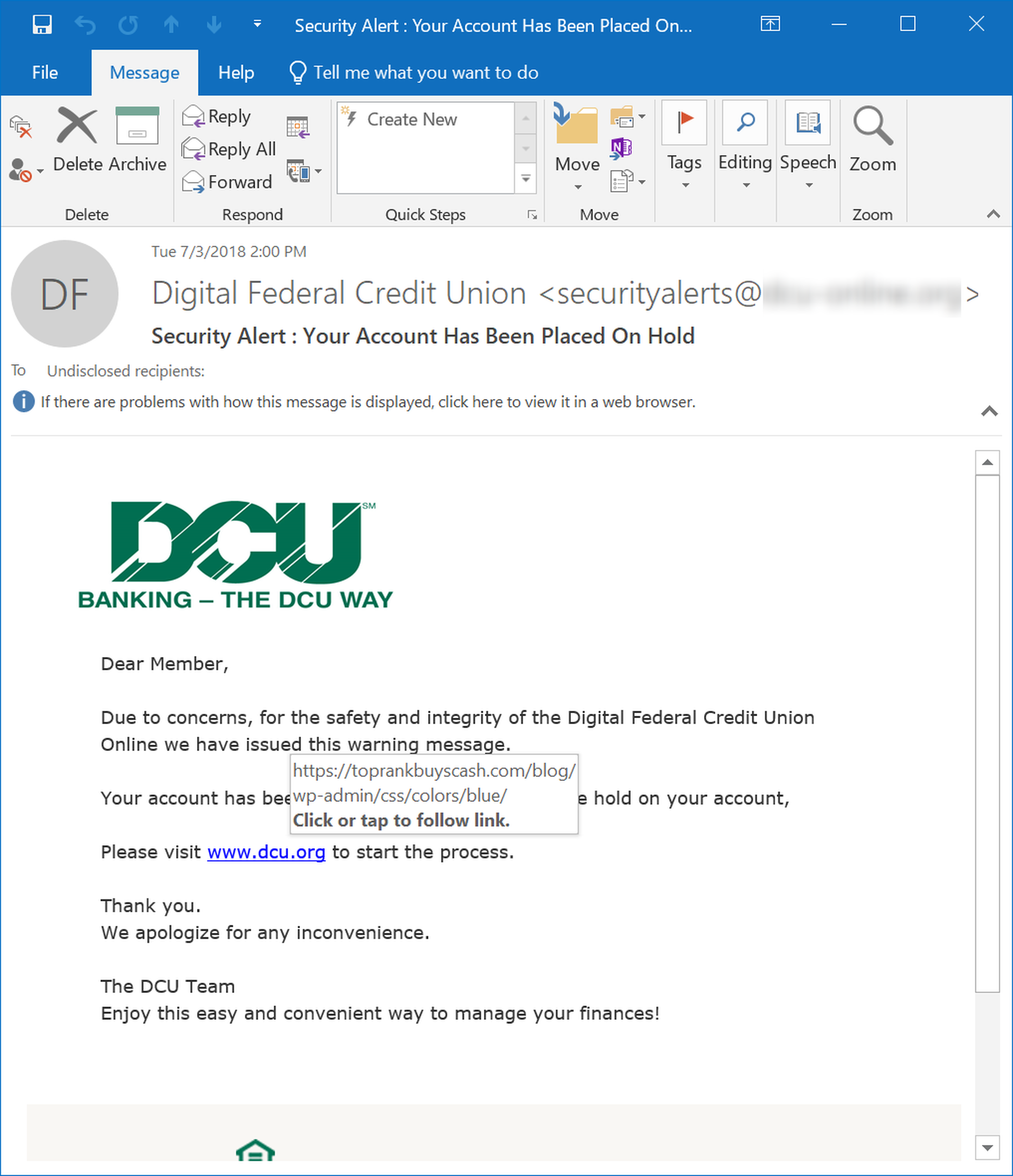

Consider the following email, which purports to be a security warning from Digital Federal Credit Union urging users to click a link to resolve a safety/integrity issue:

The key component in the provided link is the second part following the domain: blogw-admincsscolorsblue, which clearly indicates that the link points to a Wordpress site. While it is entirely possible that users may not recognize the domain used by a financial institution given that some institutions use third-party organizations to provide services to their customers, it is extremely unlikely that this kind of security-related email notice from a bank or credit union would be directing customers to a Wordpress site.

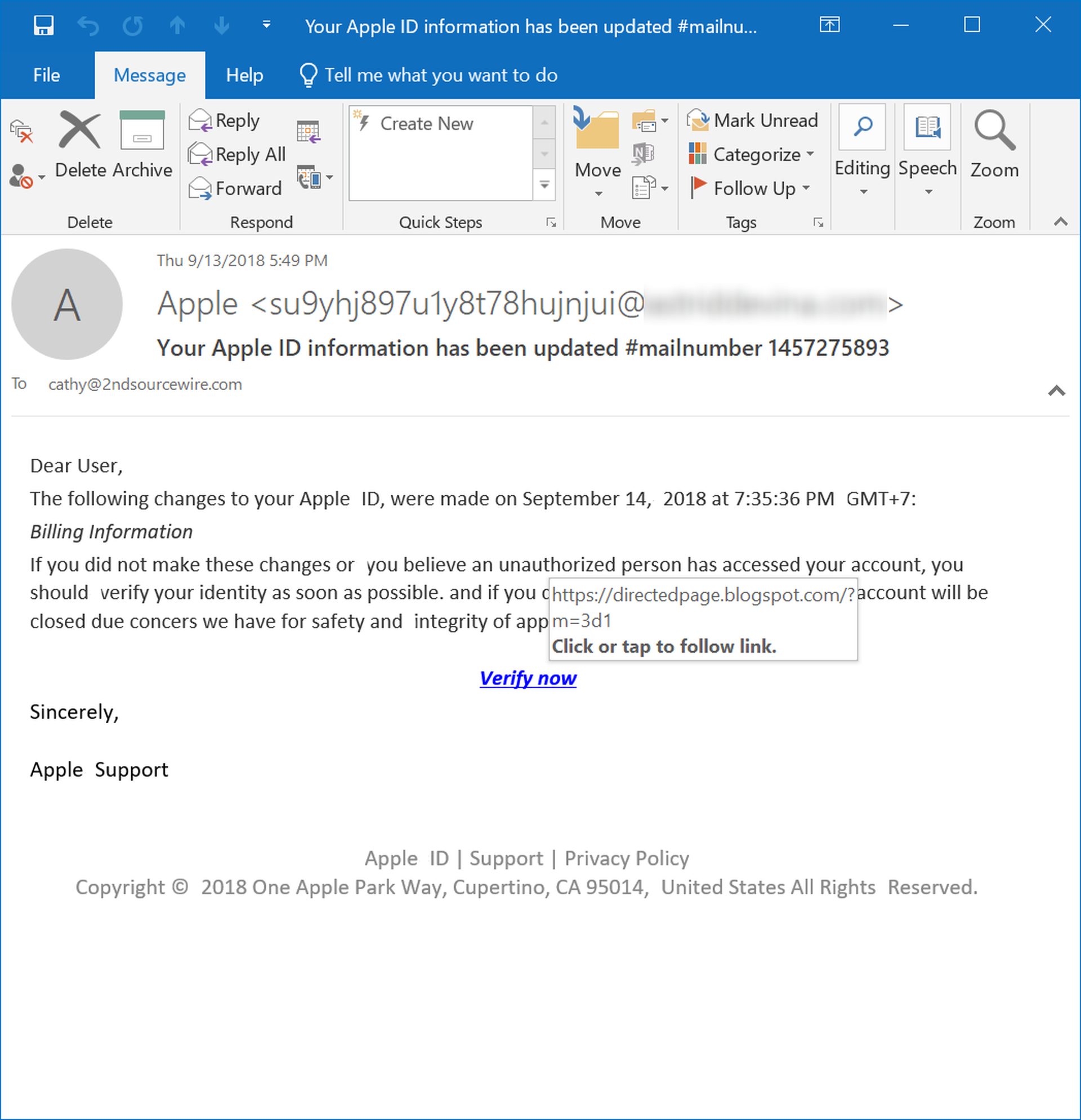

Similarly, when Apple sends users a notice regarding the security of their AppleIDs, it is nearly impossible that the company would be providing a verification link pointing to a Blogspot site, as this phishing email does:

Again, to be on the alert for these kinds of incongruities, users need to be trained in the basics of URLs. They will also need to be diligent and patient enough to check into the finer details of URLs. Nonetheless, this kind of discrepancy can be a powerful tool in the hands of users who are so inclined.
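For teams that want to automate a first pass at this check, the following is a minimal sketch. It relies on the standard WordPress directory names (`wp-admin`, `wp-content`, `wp-includes`) and on the `blogspot.com` and `wordpress.com` host suffixes; the function name and sample URLs are hypothetical, and country-specific Blogspot domains are not handled.

```python
from typing import Optional
from urllib.parse import urlparse

# Directory names that ship with standard WordPress installs.
WORDPRESS_MARKERS = ("/wp-admin/", "/wp-content/", "/wp-includes/")


def blog_platform(url: str) -> Optional[str]:
    """Return 'blogspot', 'wordpress', or None based on host and path hints."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    path = parsed.path.lower()
    if host == "blogspot.com" or host.endswith(".blogspot.com"):
        return "blogspot"
    if host.endswith(".wordpress.com") or any(m in path for m in WORDPRESS_MARKERS):
        return "wordpress"
    return None
```

A non-`None` result is only meaningful in context. It matters when the email claims to come from a bank, a credit union, or Apple; it means little in a newsletter from a hobby blog.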

- URL Shorteners

Just as the bad guys resort to fake attachments and text blocks to thwart anti-virus and other security scanners, they also make use of URL shorteners such as bit.ly to disguise the true destination of malicious links from security software as well as end users.

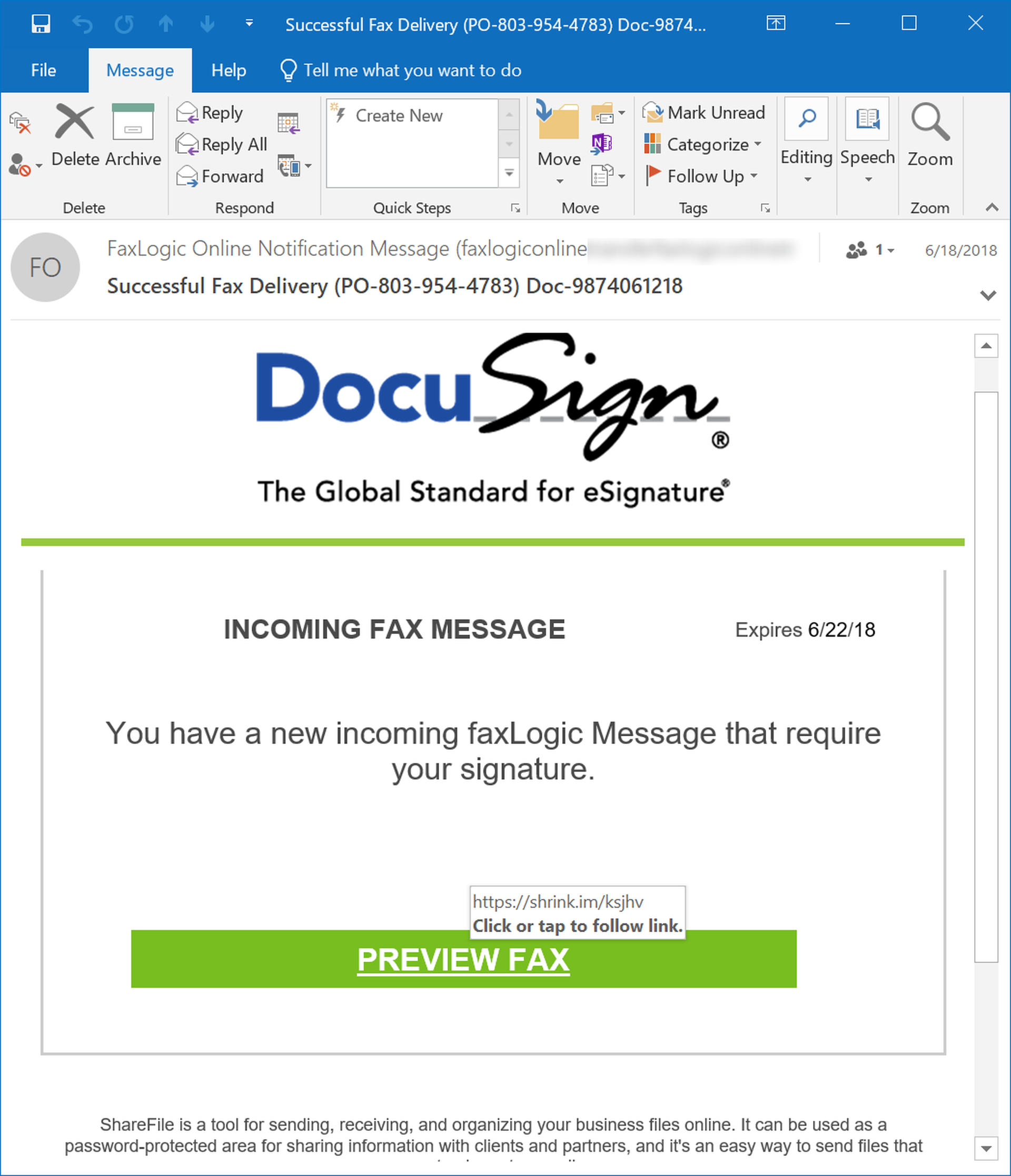

URL shorteners are ubiquitous these days, particularly on social media and web sites designed for consumption on mobile devices. While users may come to expect the use of URL shorteners in social media or web pages that provide links to other online articles, they should not expect to encounter URL shorteners when receiving links to documents delivered for signature through services like FaxLogic...

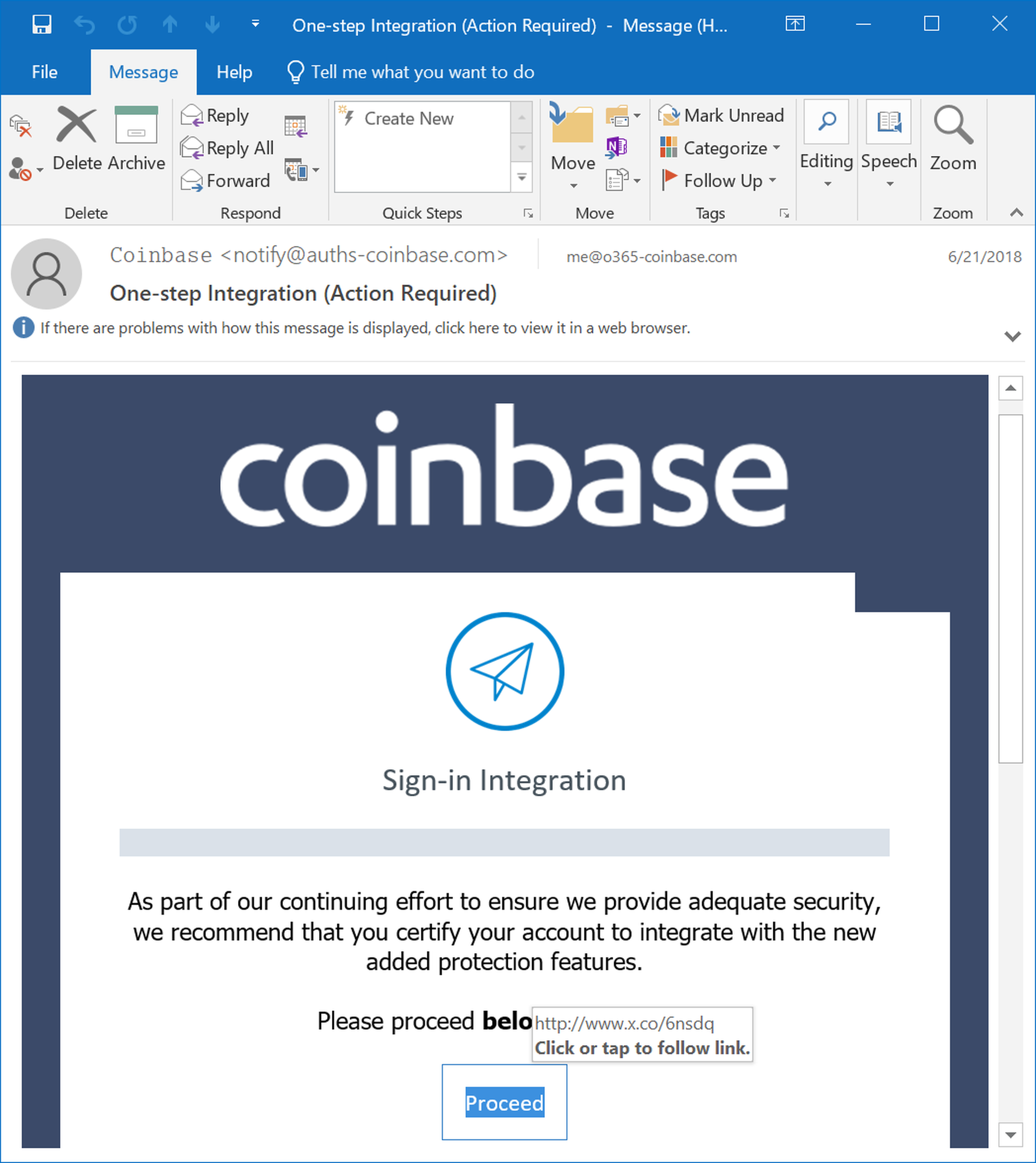

...or even when dealing with security verification messages from digital currency services like Coinbase:

Put simply, context matters when dealing with URL shorteners. While the use of URL shorteners may be perfectly normal and unremarkable in one context, in other contexts they ought to be read as a sign of deception.
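Spotting a shortened link is the easy half of the job, and it can be sketched in a few lines. The host list below is a small, illustrative sample of well-known shortening services rather than a complete inventory, and the function name is hypothetical. Deciding whether a shortener is out of place still depends on who the email claims to be from.

```python
from urllib.parse import urlparse

# A small, non-exhaustive sample of URL shortener hosts (an assumption).
SHORTENER_HOSTS = {"bit.ly", "tinyurl.com", "t.co", "goo.gl", "ow.ly", "is.gd"}


def uses_shortener(url: str) -> bool:
    """True if the URL's host is one of the listed shortening services."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return host in SHORTENER_HOSTS
```

Note that `urlparse` only recognizes the host when the URL includes a scheme such as `https://`, so bare strings like `bit.ly/abc` would need to be normalized first.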

Conclusion

The bad guys are not infallible. Sometimes they simply ignore basic quality control when constructing phishing campaigns aimed at office-based end users. Other times they become so focused on deceiving security scanners that they leave obvious "tells" in the emails that land in employees' inboxes.

Either way, these errors can be leveraged by users to sniff out potentially malicious emails and web pages. Users need to be trained to look for these "tells," though. And simply conducting ad hoc training with a PowerPoint deck in the break room over a couple of boxes of doughnuts will not equip your users with the knowledge they need to go toe-to-toe with the bad guys -- even bad guys who are having a bad day themselves.

In a high-stakes threat environment that puts your organization's reputation and security on the line, your users need new-school security awareness training that delivers usable advice for spotting malicious emails and that allows you to test your employees regularly to verify that they remain vigilant when dealing with the threats that lurk in their inboxes every day.